Several datasets are fostering innovation in higher-level functions for everyone, everywhere. By providing this repository, we hope to encourage the research community to focus on hard problems. This repository presents the severity rates (BIRADS) that clinicians assigned while diagnosing several patients in our User Tests and Analysis 4 (UTA4) study. Here, we provide a dataset of these severity rate (BIRADS) measurements for the patient diagnoses. The work and results are published at a top Human-Computer Interaction (HCI) conference, AVI 2020 (page). Results were analyzed and interpreted from our Statistical Analysis charts.

The user tests were conducted in clinical institutions, where clinicians diagnosed several patients for a Single-Modality vs Multi-Modality comparison. In these tests, we used both the prototype-single-modality and prototype-multi-modality repositories for the comparison. This dataset also represents information from both the BreastScreening and MIDA projects. These research projects deal with a technique recently proposed in the literature: Deep Convolutional Neural Networks (CNNs). Through a purpose-built User Interface (UI) and framework, these deep networks will incorporate several datasets in different modes.

For more information about the available datasets, please see the Datasets page on the Wiki of the meta information repository. Further information is available on the Wiki of this repository. We also have several demos on our YouTube Channel; please follow us.

We kindly ask that scientific works and studies that make use of the repository cite it in their associated publications. Similarly, we ask that open-source and closed-source works that make use of the repository notify us of this use.

You can cite our work using the following BibTeX entry:

@inproceedings{10.1145/3399715.3399744,
  author = {Calisto, Francisco Maria and Nunes, Nuno and Nascimento, Jacinto C.},
  title = {BreastScreening: On the Use of Multi-Modality in Medical Imaging Diagnosis},
  year = {2020},
  isbn = {9781450375351},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  url = {https://doi.org/10.1145/3399715.3399744},
  doi = {10.1145/3399715.3399744},
  abstract = {This paper describes the field research, design and comparative deployment of a multimodal medical imaging user interface for breast screening. The main contributions described here are threefold: 1) The design of an advanced visual interface for multimodal diagnosis of breast cancer (BreastScreening); 2) Insights from the field comparison of Single-Modality vs Multi-Modality screening of breast cancer diagnosis with 31 clinicians and 566 images; and 3) The visualization of the two main types of breast lesions in the following image modalities: (i) MammoGraphy (MG) in both Craniocaudal (CC) and Mediolateral oblique (MLO) views; (ii) UltraSound (US); and (iii) Magnetic Resonance Imaging (MRI). We summarize our work with recommendations from the radiologists for guiding the future design of medical imaging interfaces.},
  booktitle = {Proceedings of the International Conference on Advanced Visual Interfaces},
  articleno = {49},
  numpages = {5},
  keywords = {user-centered design, multimodality, medical imaging, human-computer interaction, healthcare systems, breast cancer, annotations},
  location = {Salerno, Italy},
  series = {AVI '20}
}

The following dependencies are required to run this project locally:

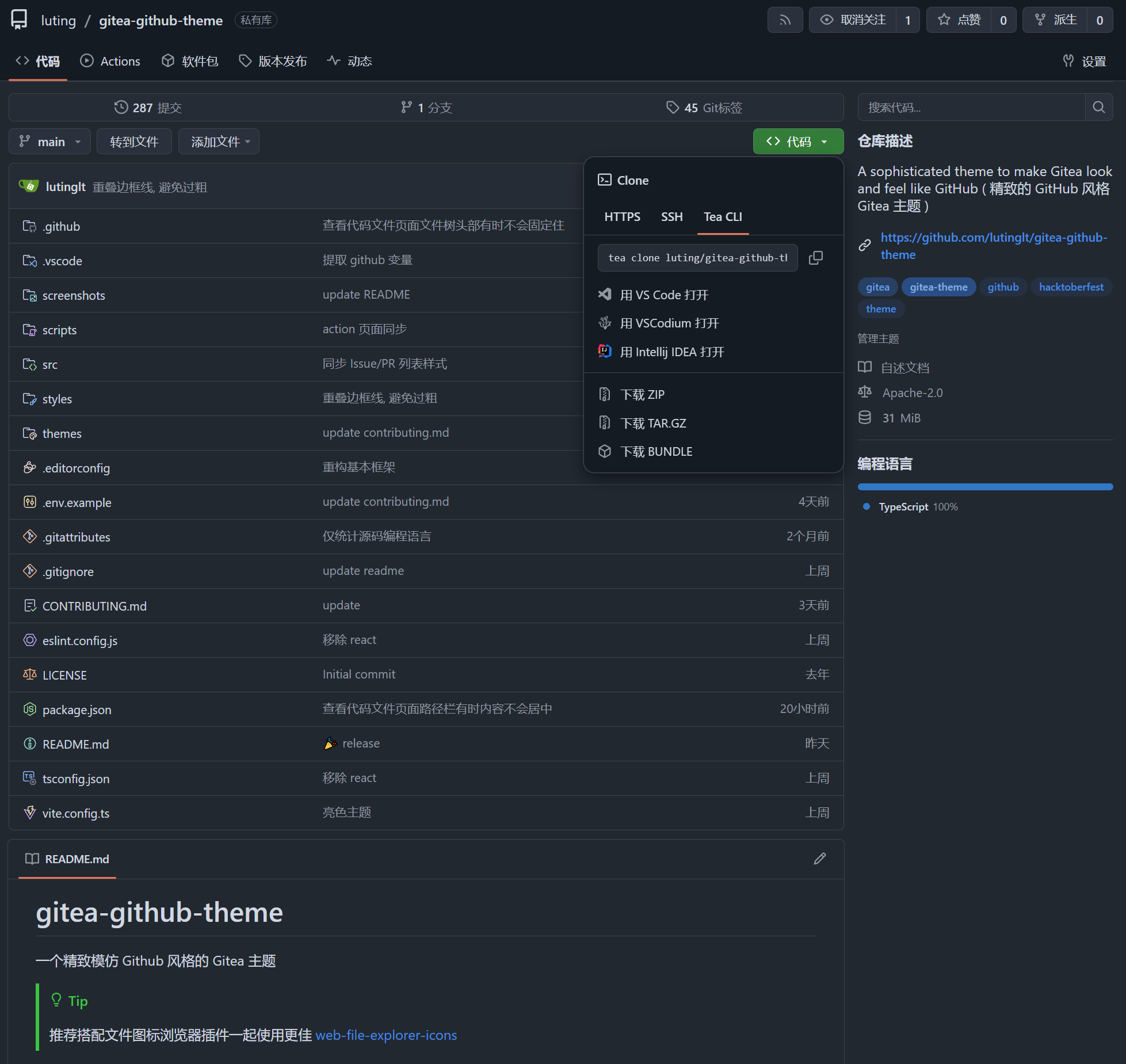

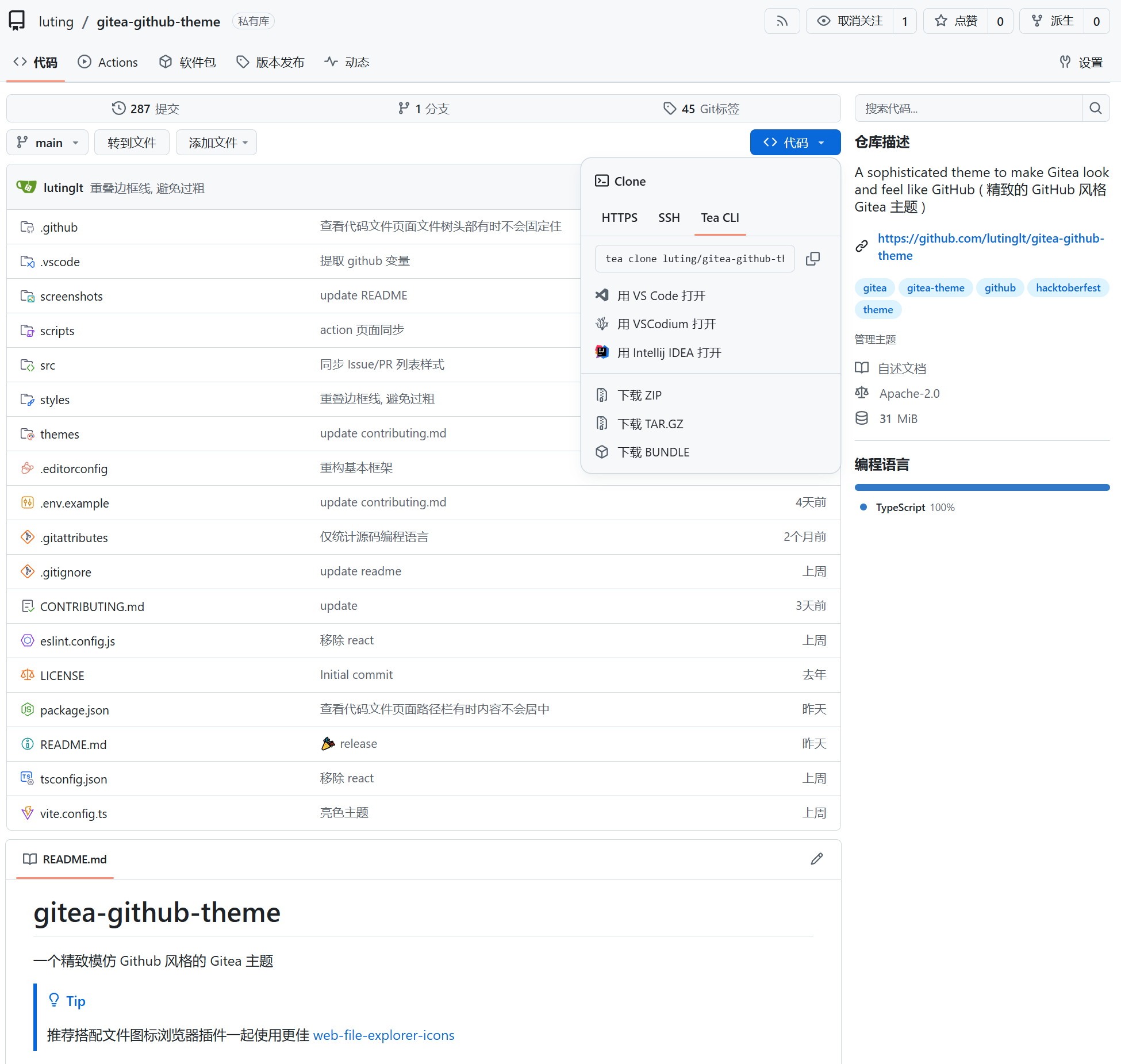

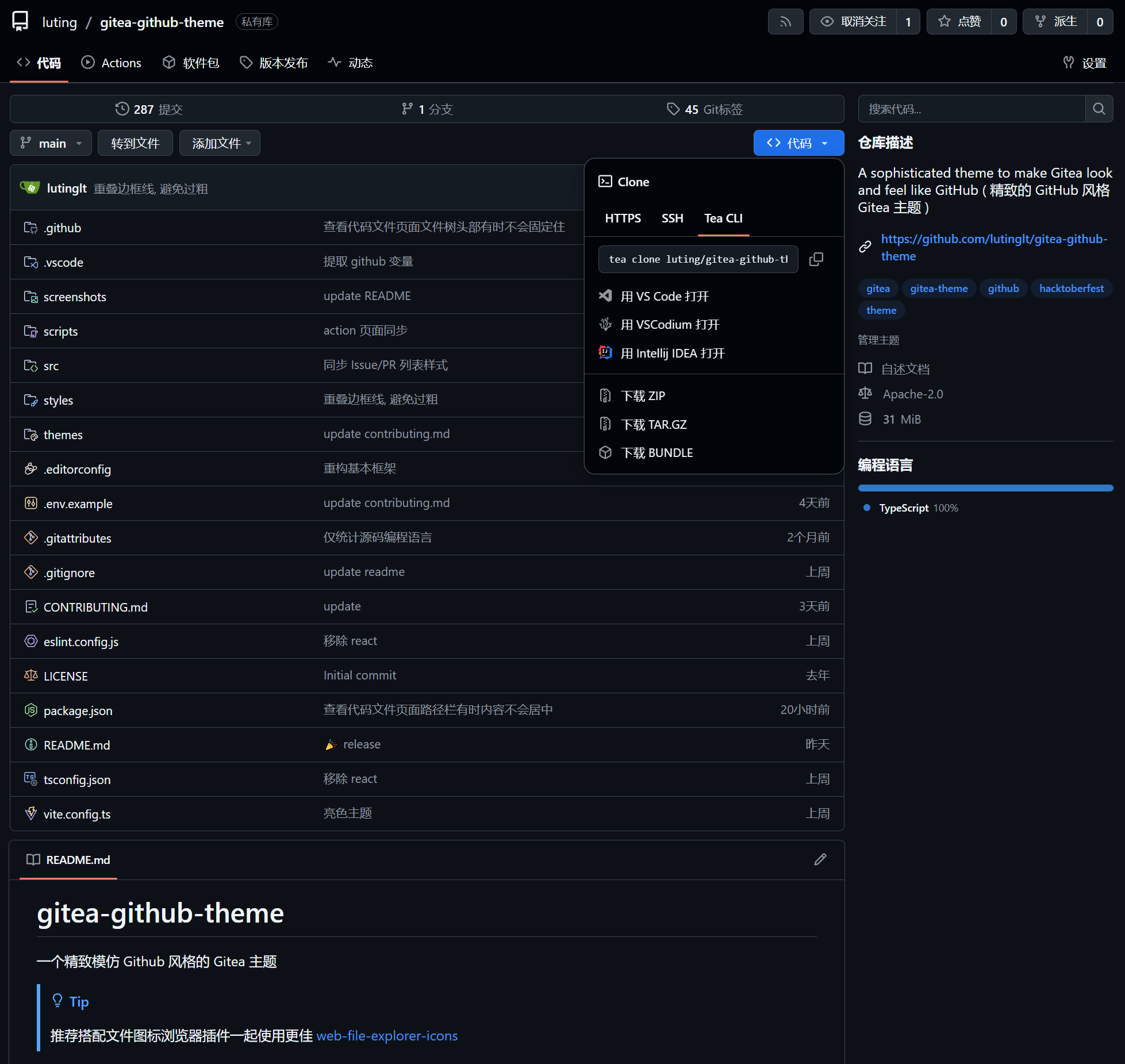

- Git, or any other Git-compatible or GitHub version control tool

- Python (3.5 or newer)

Here are some tutorials and documentation, if needed, to feel more comfortable about using and playing around with this repository:

Usage

Follow the instructions here to set up the current repository and extract the present data. To understand how this repository is used, read the following steps.

At this point, the repository can only be installed manually. Eventually, it will be accessible through pip or another package manager, as mentioned on the roadmap.

Nonetheless, this kind of installation is as simple as cloning this repository. Virtually all Git and GitHub version control tools are capable of doing that. Through the console, we can use the command below, but other ways are also fine.

git clone https://github.com/MIMBCD-UI/dataset-uta4-rates.git

Optionally, the module/directory can be installed into the designated Python interpreter by moving it into the site-packages directory of the respective Python installation.
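
As a minimal sketch of this optional step, the standard library can report where the running interpreter keeps its site-packages directory. The helper name `site_packages_dir` is hypothetical and not part of this repository; moving the cloned directory there is left to the reader.

```python
import sysconfig
from pathlib import Path


def site_packages_dir() -> Path:
    """Return the directory where the running interpreter installs pure-Python packages."""
    # "purelib" is the sysconfig path key for pure-Python package installs.
    return Path(sysconfig.get_paths()["purelib"])


if __name__ == "__main__":
    # Print the location so the cloned directory can be moved there by hand.
    print(site_packages_dir())
```

Run it with the same interpreter you intend to use, since each Python installation or virtual environment reports its own directory.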

Please feel free to try out our demo, a script called demo.py in the src/ directory. It can be used as follows:

Keep in mind that this is only a demo, so it does nothing more than download data to an arbitrary destination directory if that directory does not exist or has no content. We did our best to make the demo as user-friendly as possible, so, above everything else, have fun! 😁
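
The behavior described above (download only when the destination directory is missing or empty) can be sketched as follows. This is an illustration under stated assumptions, not the code in demo.py: the names `needs_download` and `ensure_data`, and the `fetch` callable standing in for the actual download step, are hypothetical.

```python
from pathlib import Path
from typing import Callable


def needs_download(dest: Path) -> bool:
    """Return True if dest does not exist or is an empty directory."""
    if not dest.exists():
        return True
    # any() stops at the first entry, so large directories are cheap to check.
    return dest.is_dir() and not any(dest.iterdir())


def ensure_data(dest: Path, fetch: Callable[[Path], None]) -> bool:
    """Call fetch(dest) only when the data is missing; return True if it ran."""
    if not needs_download(dest):
        return False
    dest.mkdir(parents=True, exist_ok=True)
    # `fetch` is a placeholder (assumption) for whatever retrieves the files.
    fetch(dest)
    return True
```

Passing the download step in as a callable keeps the check separate from the transfer, so the skip logic can be exercised without network access.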

Our roadmap follows the goal of this repository. It is therefore of chief importance to scale the solution that the repository supports. The repository follows best practices and meets the Core Infrastructure Initiative (CII) specifications.

Besides that, one of our goals is to create a configuration file that automatically tests and publishes our code to pip or another package manager. It will most likely be prepared for GitHub Actions. Other goals may be written here in the future.

This project exists thanks to all the people who contribute. We welcome everyone who wants to help us improve this repository. Below, we present some suggestions.

If something seems missing or you need support, just open a new issue. Whether it is a simple request or a fully structured feature, we will do our best to understand it and, eventually, resolve it.

We like to develop, but we also like collaboration. You could ask us to add some features… Or you could do it yourself and fork this repository. Maybe even start a side project of your own. In the latter cases, please let us share some insights about what we currently have.

This section summarizes the important items of this repository and addresses the fundamental items behind the current information.

The following list represents the set of repositories related to this one:

To publish our datasets, we used a well-known platform called Kaggle. To access our project’s Profile Page, just follow the link. For this purpose, three main resources (uta4-singlemodality-vs-multimodality-nasatlx, uta4-sm-vs-mm-sheets and uta4-sm-vs-mm-sheets-nameless) are published on this platform. Moreover, the Single-Modality vs Multi-Modality dataset is available on our MIMBCD-UI Project page on data.world. Last but not least, the datasets are also published on the figshare and OpenML platforms.

Copyright © 2020 Instituto Superior Técnico

The dataset-uta4-rates repository is distributed under the terms of the GNU AGPLv3 license and CC-BY-SA-4.0 copyright. Permissions of this license are conditioned on making available complete elements from this repository of licensed works and modifications, which include larger works using a licensed work, under the same license. Copyright and license notices must be preserved.

Our team brings everything together, sharing ideas and a common purpose to develop even better work. In this section, we name the full list of important people for this repository, along with their respective links.

- Hugo Lencastre

- Nádia Mourão

- Bruno Dias

- Bruno Oliveira

- Luís Ribeiro Gomes

- Carlos Santiago

This work was partially supported by national funds through FCT and IST through the UID/EEA/50009/2013 project, BL89/2017-IST-ID grant. We thank Dr. Clara Aleluia and her radiology team at HFF for their valuable insights and for helping by using the Assistant on a daily basis. From IPO-Lisboa, we would like to thank the medical imaging teams of Dr. José Carlos Marques and Dr. José Venâncio. From IPO-Coimbra, we would like to thank the radiology department director and the whole team of Dr. Idílio Gomes. We would also like to acknowledge Dr. Emília Vieira and Dr. Cátia Pedro from Hospital Santa Maria. Furthermore, we want to thank the whole team from the radiology department of HB for their participation. Last but not least, a great thanks to Dr. Cristina Ribeiro da Fonseca, who, among others, is giving us crucial information for the BreastScreening project.

Our organization is non-profit. However, we have many needs across our activity. From infrastructure to services, we need time, contributions, and help to support our team and projects.

This project exists thanks to all the people who contribute. [Contribute].

Thank you to all our backers! 🙏 [Become a backer]

Sponsors

Support this project by becoming a sponsor. Your logo will show up here with a link to your website. [Become a sponsor]

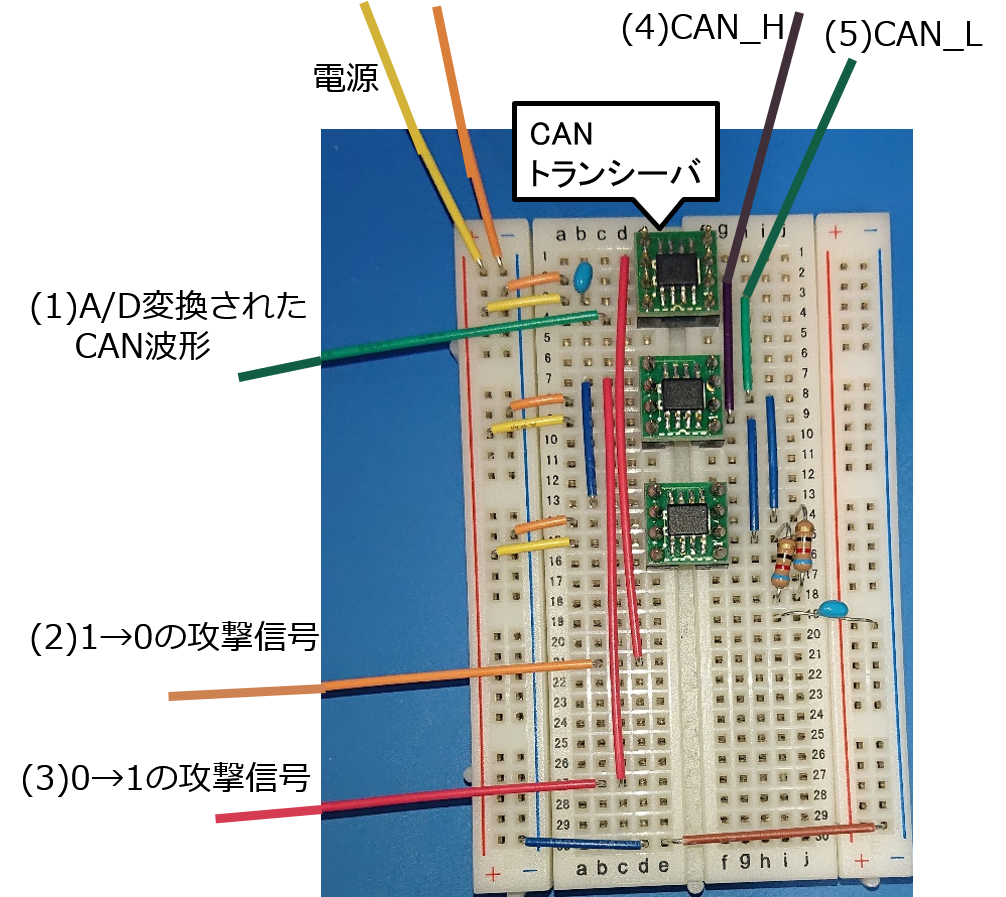

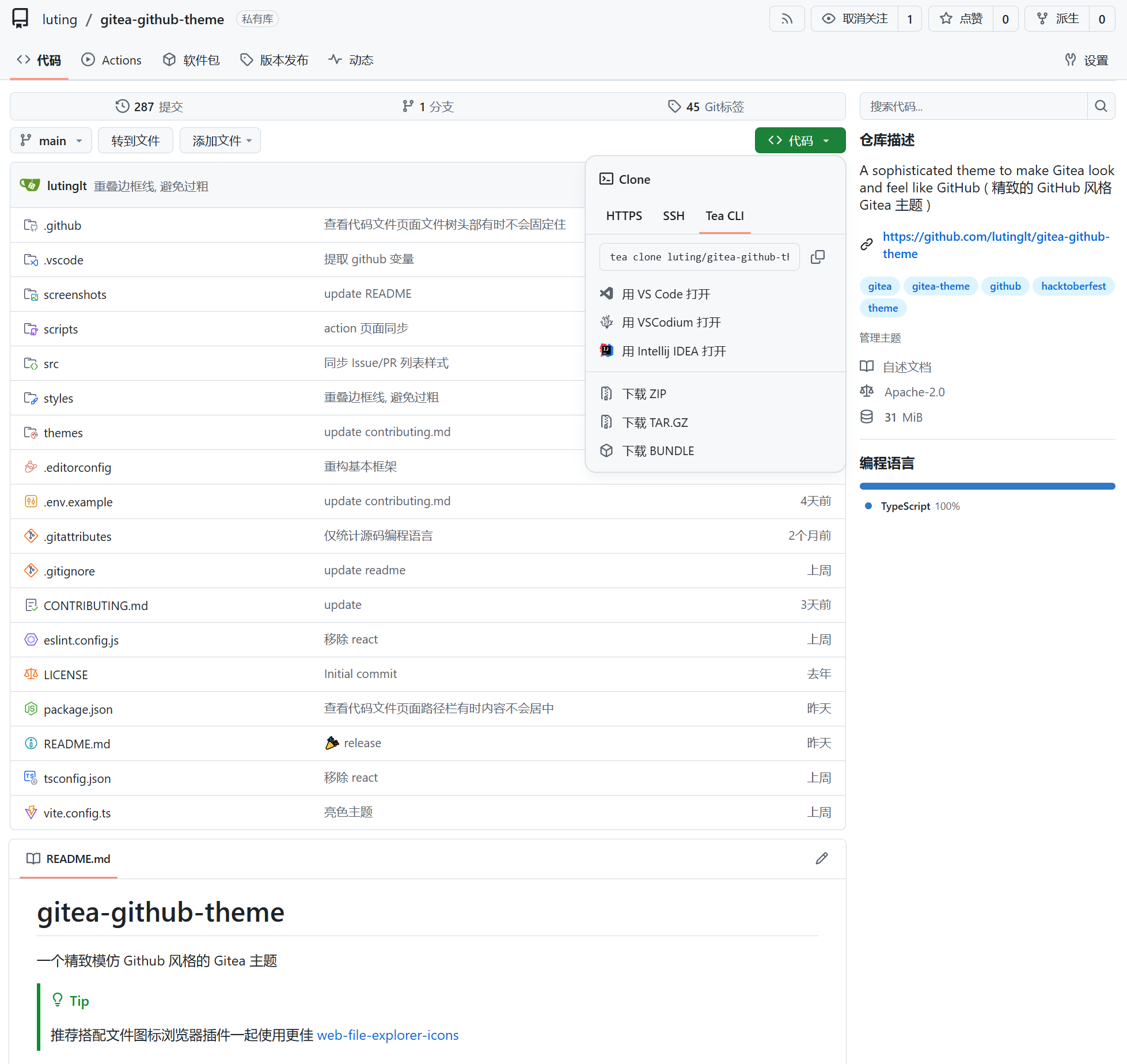

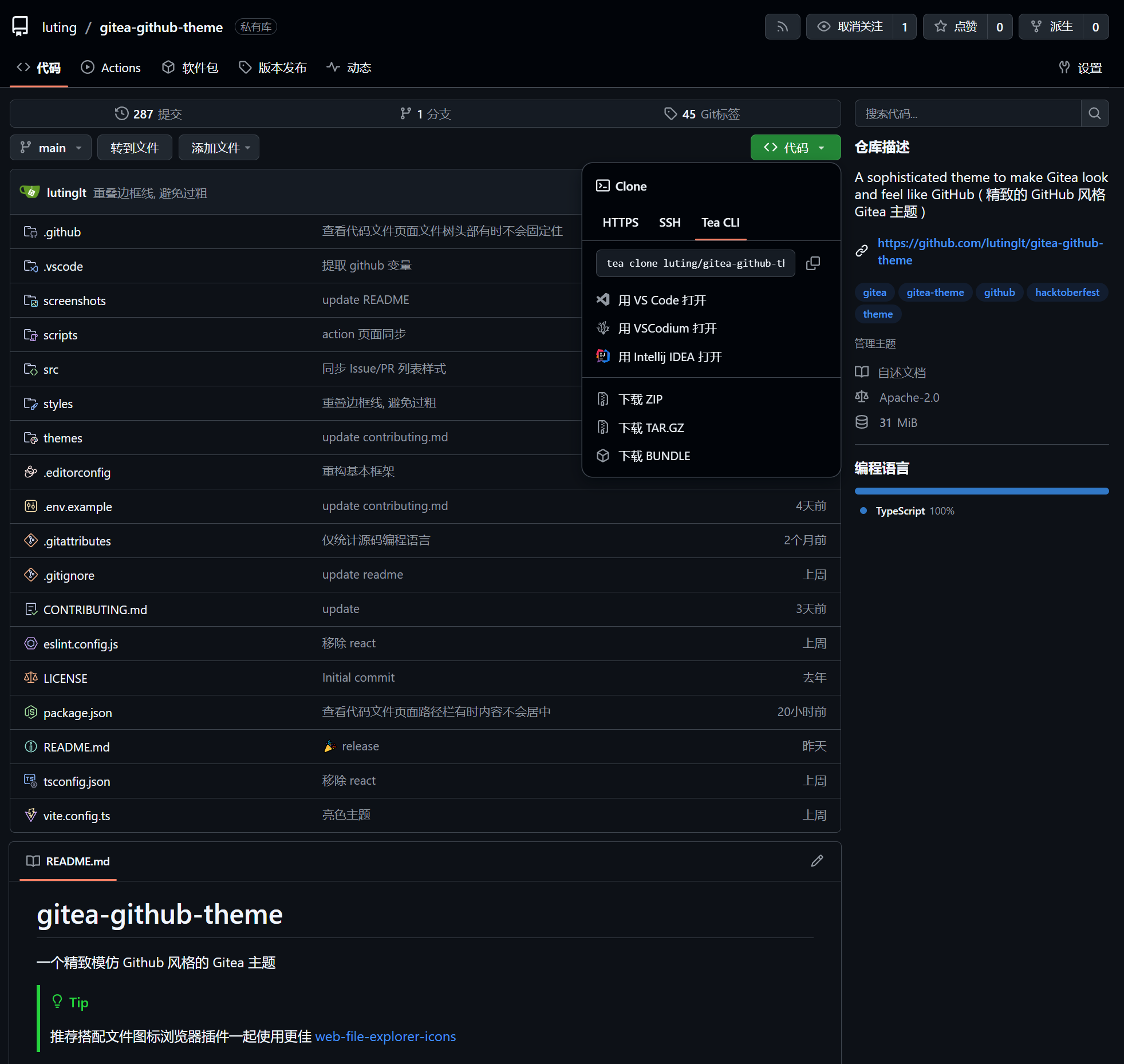

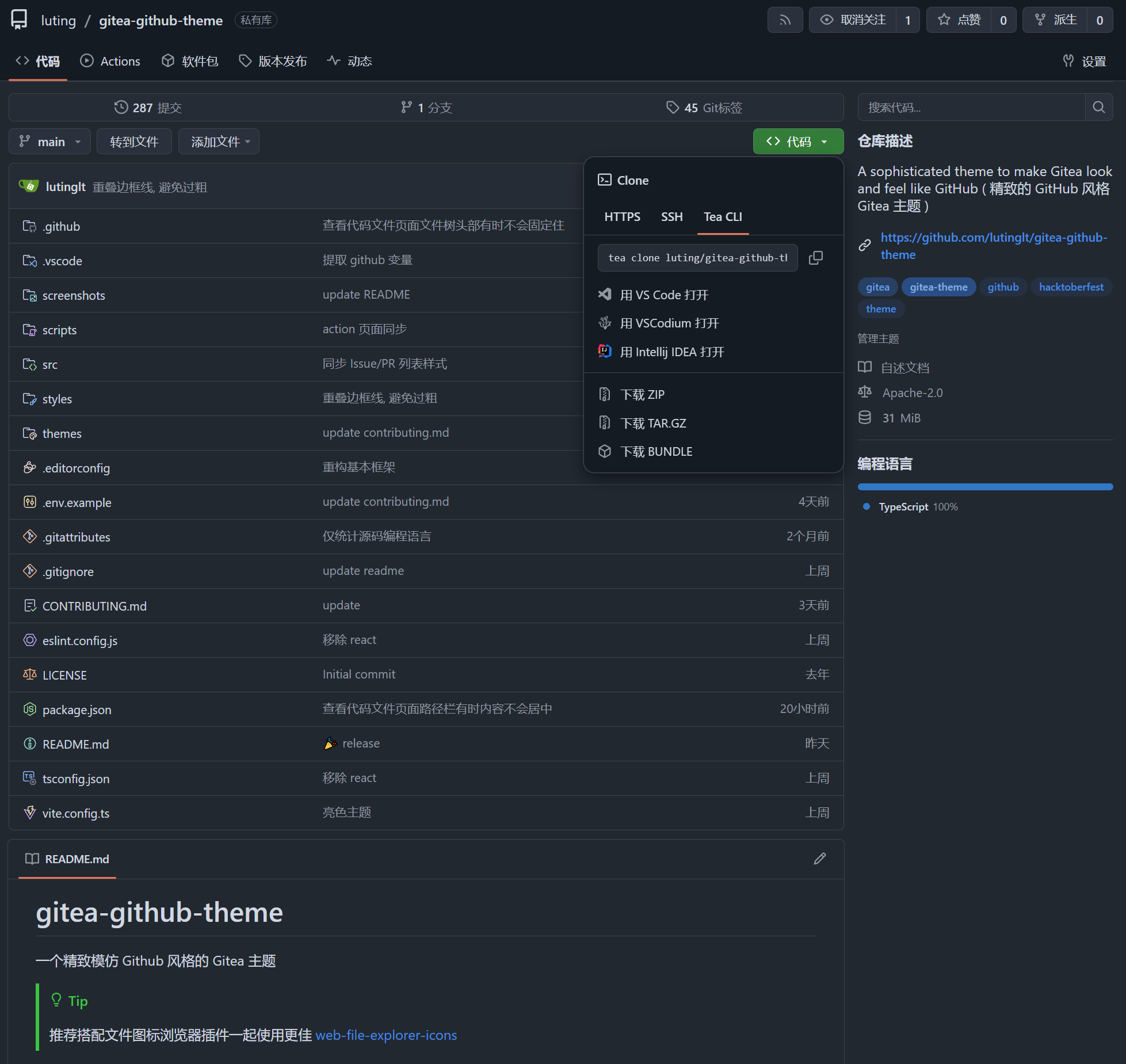
